A Look Ahead: What Does the Spread of AI Mean for Mental Health?

On October 10th, the world observes World Mental Health Day. The occasion offers an opportune moment to reflect on the impact of artificial intelligence on our lives and, specifically, on our mental health.

In recent years, AI has made remarkable strides in mental health research, diagnostics, and treatment. These advances have the potential to transform the way we assess and care for mental health concerns.

However, these promising prospects come with their own caveats and concerns. Let’s explore the multifaceted role of AI in mental health, its promises and its perils, and consider how we can strike a balance that promotes better mental well-being for all.

AI in Mental Health Research

AI is changing the way we approach healthcare and understand mental health issues. Drawing on digitized healthcare data such as electronic health records (EHRs), medical images, and clinical notes, AI-based solutions help automate routine tasks, support clinicians, and give researchers deeper insight into mental health disorders.

One notable area where AI is making progress is dementia diagnosis. Researchers at the University of Cambridge and The Alan Turing Institute are developing predictive and prognostic machines (PPMs) designed to detect early signs of dementia-related cognitive decline. The project's ultimate goal is to turn PPMs into fully deployable clinical decision support systems that rely on less invasive data sources, such as cognitive testing, making the diagnostic process more patient-friendly.

This approach improves patient well-being by minimizing invasive procedures and helps optimize the allocation of healthcare resources. The resulting AI-driven systems could help doctors make more precise diagnostic and treatment decisions, reduce healthcare costs, and speed up the development of advanced dementia therapies.

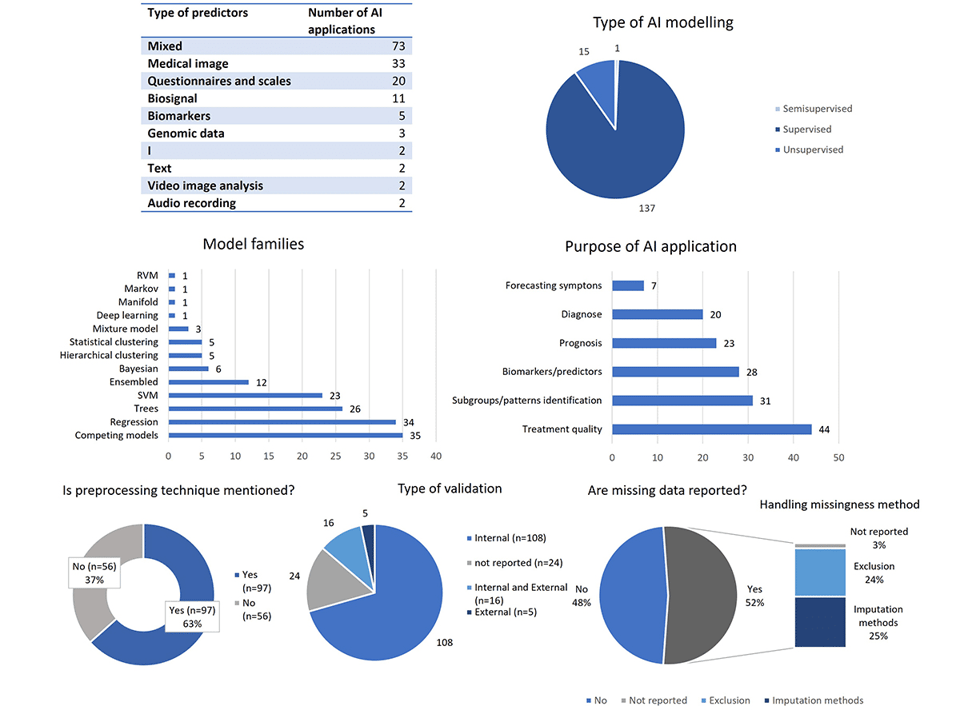

However, it's crucial to acknowledge that the integration of AI into mental health research has drawn criticism. A recent study titled "Methodological and quality flaws in the use of artificial intelligence in mental health research: a systematic review," conducted by experts from the Polytechnic University of Valencia, Spain, in collaboration with the WHO, critically examined the use of AI in studies of mental health disorders between 2016 and 2021.

The review highlighted methodological and quality flaws in how AI has been applied in mental health research. It noted that AI use has been unbalanced, concentrating primarily on depressive disorders, schizophrenia, and other psychotic disorders. It also raised issues related to transparency, data validation, and collaboration within the AI research community.

AI in Mental Health Diagnostics: The Case of PTSD

Post-Traumatic Stress Disorder (PTSD) is a condition that affects millions of people worldwide, yet diagnosing it accurately and efficiently has long been a challenge. Recent advances in artificial intelligence and machine learning are paving the way for more effective PTSD diagnosis.

In 2019, researchers at NYU Langone Health made significant progress in using artificial intelligence to diagnose PTSD. Their first study examined vocal patterns in veterans with and without diagnosed PTSD; the resulting algorithm identified vocal characteristics linked to PTSD with 89% accuracy. In a second study, the team used AI to uncover potential blood markers for PTSD, opening the door to a possible PTSD screening blood test.

Last month, a comprehensive review synthesizing 41 studies shed light on AI's potential to transform PTSD diagnosis. Taken together, the reviewed studies indicate that AI can significantly improve the accuracy and effectiveness of PTSD diagnostic approaches. Across methods ranging from neuroimaging, structured clinical interviews, and self-report questionnaires to newer approaches such as social media analysis and biomarker identification, AI has shown the potential to substantially improve the way we identify and address PTSD.

Despite the remarkable progress in the field, several barriers still hinder the widespread clinical adoption and realization of AI's full potential in early PTSD diagnosis. Ethical and privacy considerations are paramount, as the use of sensitive data for diagnostic purposes raises important questions about patient confidentiality and data security. Additionally, the absence of standardized regulations poses a challenge, as the field grapples with the need for guidelines to ensure the ethical and responsible use of AI in mental healthcare.

Use of AI in Mental Health Therapy

A global study co-led by Harvard Medical School and the University of Queensland found that half of the world's population will develop at least one mental disorder by age 75, underscoring a massive unmet need for treatment and support.

Considering the staggering prevalence of mental health disorders worldwide, it's no surprise that in recent years, there has been a surge in the development of mental health tools leveraging the power of artificial intelligence.

AI-based Apps for Mental Healthcare

In response to the pressing issue of unmet mental health needs, AI-powered mental health apps are stepping up to fill the gap. These apps leverage advanced algorithms and data analysis to extend access to mental health care beyond traditional in-person therapy.

Among these AI-based solutions are platforms like Mindmate, Endel, BetterHelp, Talkspace, and Wysa. Wysa, for instance, is an AI-guided mental health support app designed to serve as a first step in mental health care. It uses natural-language conversation to engage users about their mental state and suggests ways to reduce anxiety and reframe thought patterns. By offering techniques such as relaxation and deep-breathing exercises, it helps bridge the gap between individuals and available mental health care resources.

Sound has been recognized across cultures and ages for its immersive, transformative qualities: it can affect our sleep, mood, concentration, blood pressure, and more. Endel combines the power of sound with AI to create real-time, personalized soundscapes tailored to the user's environment and needs. Whether the goal is to relax, focus, or drift off to sleep, Endel's AI-driven sound environments adapt to offer the optimal auditory experience for the moment, grounding the user and promoting better mental health.

Rask AI: What advice would you give people for maintaining their mental health in 2023-24?

Wearable AI for Mental Health

In a paradigm shift away from traditional mental health assessment methods, some AI-powered solutions rely on readings from wearable devices, interpreting bodily signals captured by sensors. Wearables like the Apple Watch offer a unique opportunity to assess psychological states remotely, without conventional questionnaires or in-person evaluations.

One such study, led by Dr. Robert P. Hirten at the Hasso Plattner Institute for Digital Health at Mount Sinai, aimed at “assessing whether an individual’s degree of psychological resilience can be determined from physiological metrics passively collected from a wearable device.” The dataset covered 329 healthcare workers who wore Apple Watch Series 4 or 5 devices that continuously measured heart rate variability and resting heart rate. Participants also completed surveys gauging their resilience, optimism, and emotional support.

The researchers used machine learning models to analyze this data and predict individuals' levels of resilience and psychological well-being. The findings point to the feasibility of evaluating psychological characteristics from wearable data, a potentially pivotal advance in mental health assessment.

AI in Mental Health Therapy: a Transformative Solution…

Harnessing artificial intelligence in mental health treatment offers many advantages. The list below is not exhaustive, but it covers key benefits cited across multiple studies and research articles on integrating AI into mental healthcare:

- Reducing stigma. AI-powered virtual therapists and chatbots offer discreet mental health support, allowing individuals to seek help without disclosing their condition to another human.

- Enhancing accessibility. Conditions like depression or autism can make human interaction challenging. By offering support, diagnosis, and therapy options through apps and chatbots, AI can make care more accessible to those who struggle with face-to-face contact.

- Effective communication. Virtual interviewers and robot therapists have shown promise in encouraging patients to open up about their conditions and improving engagement in talk therapy. They can bridge the communication gap, especially in cases like PTSD.

- Addressing shortages. With a global shortage of mental health practitioners, AI can step in to diagnose, treat, and provide support. Apps and chatbots can reach individuals in need, extending mental health care to more people.

- Reducing bias. By considering a wide range of factors, including symptoms, genetics, and wearable data, AI can support more impartial diagnoses and reduce the impact of human bias in the diagnostic process.

- Enhancing compliance. AI can help ensure that patients adhere to their treatment plans through reminders, tracking, and personalized interventions, leading to more effective outcomes.

- Personalized treatment. AI offers the potential to tailor treatment plans for various mental health conditions by continuously monitoring symptoms and treatment responses.

…or a Potential Minefield?

It’s no secret in the medical field that artificial intelligence comes with its own pitfalls that demand our attention:

- Diagnostic complexities. Mental health conditions are intricate and often lack objective numerical data for diagnosis. Many AI studies are retrospective and lack external validation, casting doubt on their diagnostic accuracy and reliability.

- Privacy and data misuse. AI's data collection capabilities raise privacy concerns, as personal health data can be vulnerable to tracking and misuse by third parties. Protecting patient data is paramount but challenging in an AI-driven landscape.

- Bias amplification. AI algorithms can perpetuate biases present in their training data, which can lead to discriminatory outcomes in mental health treatment, exacerbating existing disparities.

- Over-mechanization. Increasingly sophisticated AI tools risk replacing human care with automation. Maintaining the human touch in healthcare is essential for patient well-being.

- Regulatory challenges. The lack of comprehensive regulatory guidelines poses significant challenges in overseeing AI applications in healthcare.

- Patient-provider relationship. Over-reliance on AI tools may strain the patient-provider relationship, potentially leading to technology addiction and decreased access to in-person healthcare services.

AI in the Workplace: the Impact on Mental Health

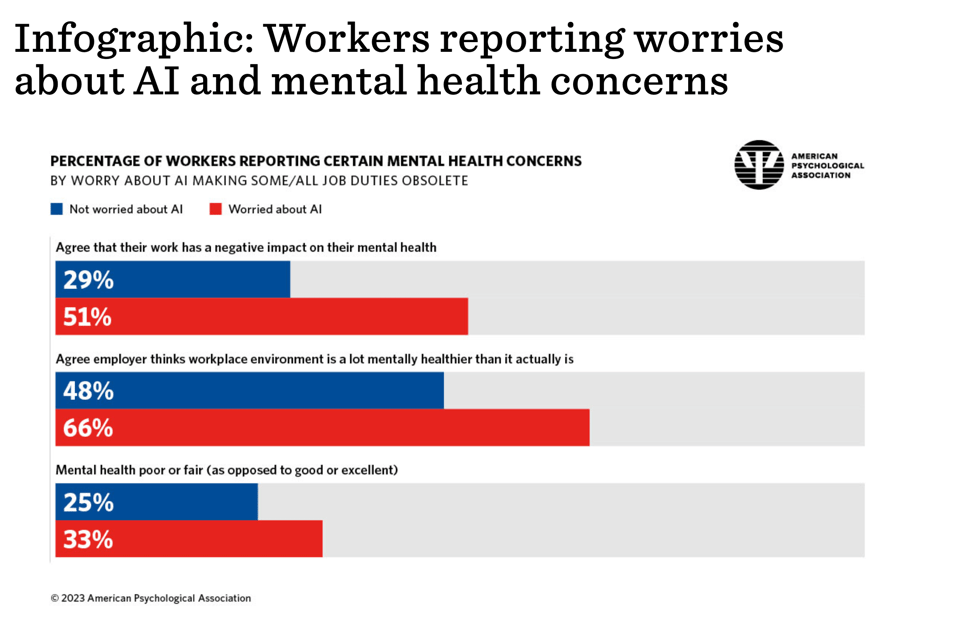

AI's growing presence in the workplace has prompted understandable concerns among employees, giving rise to a phenomenon often called "AI anxiety." The APA's 2023 Work in America survey highlights a notable connection between these worries and employees' psychological well-being: 38% of workers said they were concerned that AI might make some or all of their job duties obsolete. Alarmingly, these worries correlate with indicators of poorer mental and emotional well-being.

Workers worried about AI were more likely to report negative effects on their mental health, to view their workplace as less mentally healthy, and to describe their overall mental health as fair or poor. These concerns were also associated with feeling undervalued, being micromanaged, and fearing that technology could take over their roles.

Opinions Among the Population

And what about the public at large? Opinions on integrating artificial intelligence into mental health care reveal a mix of reservations and hopes.

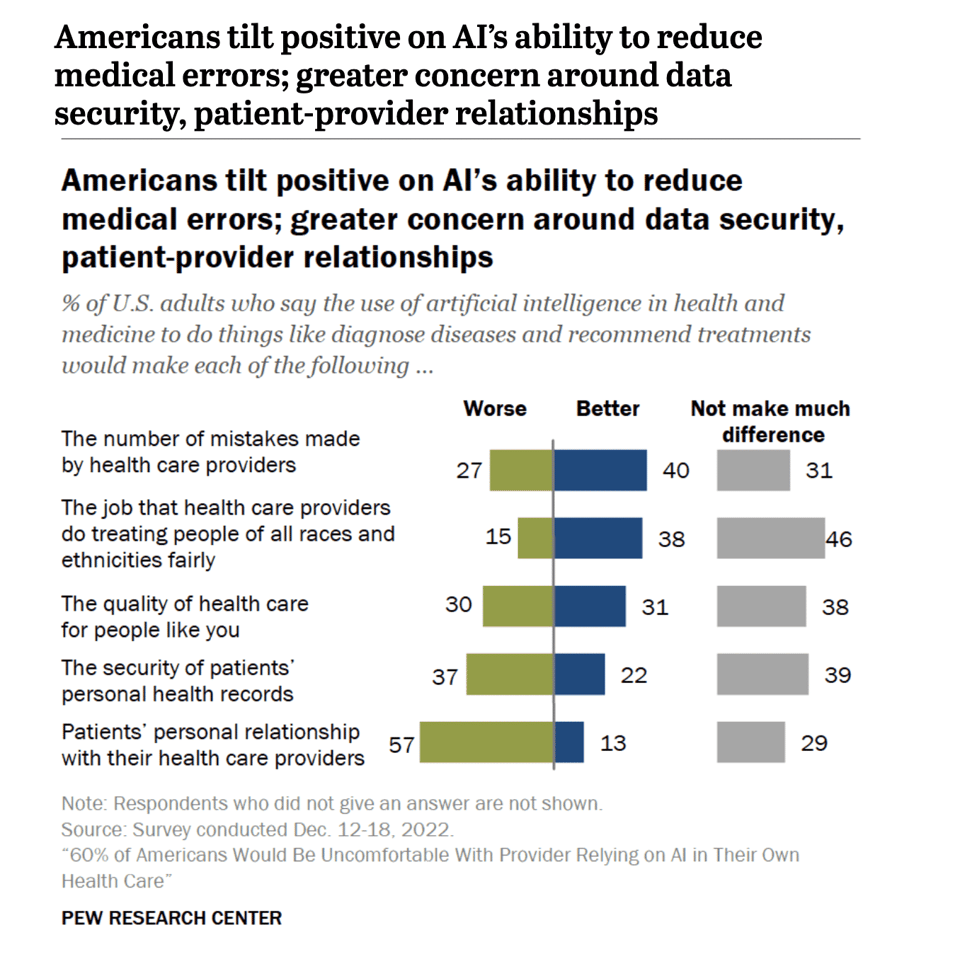

A recent Pew Research Center survey examined how Americans view AI's role in health and medicine, including its potential impact on mental health. The findings revealed significant discomfort with AI playing a part in people's own health care: about 60% of U.S. adults said they would be uncomfortable with their health care provider relying on AI for tasks like diagnosing disease and recommending treatments, while only 39% said they would be comfortable.

One factor behind these sentiments is public skepticism about AI's capacity to improve health outcomes. The survey found that only 38% believed that using AI for tasks such as diagnosing disease and recommending treatments would lead to better health outcomes for patients overall.

On the positive side, more Americans believed that AI would reduce rather than increase the number of mistakes made by healthcare providers (40% vs. 27%). Additionally, among those who see racial and ethnic bias as a problem in healthcare, a slim majority (51%) expected AI to improve the situation, compared with 15% who thought it would make it worse.

Security was another concern: 37% believed that integrating AI into health and medicine could compromise the security of patients' records, while 22% thought it would improve security. These contrasting perspectives underscore how complex public opinion is on AI's role in health care, including mental health care.

Food for thought

Rask AI: With World Mental Health Day approaching, what would be your key message or recommendation on using AI in a balanced way, so that it benefits rather than hinders mental well-being?

AI developers bear the duty of crafting systems with user-centric designs that prioritize transparency, privacy, and trustworthiness. Employers who integrate AI into the workplace must ensure their employees receive adequate training and support to navigate this evolving technological landscape, while vigilantly monitoring for any adverse psychological effects. Regulatory bodies play a pivotal role in establishing standards and guidelines to govern the development and deployment of AI, safeguarding individuals' psychological welfare.

Yet the broader responsibility extends to society itself as we collectively navigate AI's profound impact on mental health. Engaging in open dialogue, advocating for ethical AI practices, and pushing for policies that uphold individuals' rights and well-being are all essential contributions we can make as a society.

Do you agree or disagree? We would love to hear your opinion on the application of AI in the field of mental health. If you have a story to share, drop us a line at [email protected].

Read more insights on the ways AI is changing the very concept of living here.
